Most AI projects don’t fail because the model is bad. They fail because AI exposes gaps in human judgement: people cannot ask good questions, and nobody asks whether the model should exist in the first place. In many failed AI transformation projects the technology worked perfectly, but the people around it did not bring the knowledge needed to deliver meaningful value or make the project succeed.

How so? Not because people lacked technical skills, but because AI exposes gaps in human skills.

For example –

You can automate a workflow in 30 days.

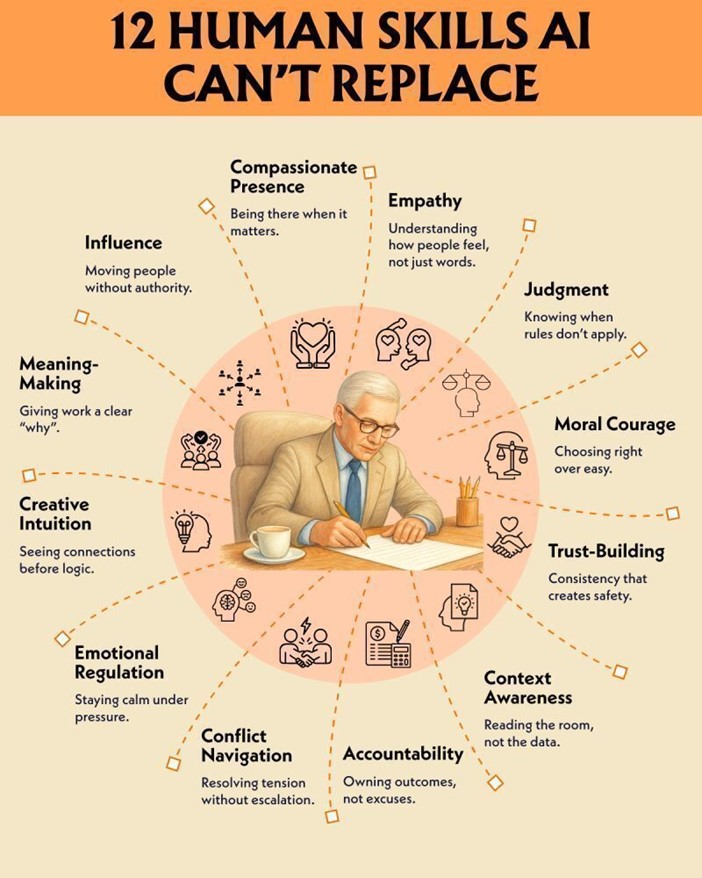

You cannot automate –

– judgement

– good insight

– courage

– morality / ethics / integrity

– the ability to read a situation

… when the data says one thing and human senses say another!

AI initiatives typically collapse not because the model failed, but because nobody challenged the assumptions, the plan, the strategy, or the implementation. That’s not an AI problem. That’s a human problem.

In another example, adoption of AI and a new process stalled not because the tool was hard to use, but because the leader couldn’t build enough interest and trust to effect the change before resistance set in.

This is a change management challenge, rooted in a human awareness and trust-building problem.

Another requirement that most AI strategies ignore is the 3C AI Leadership Model™, which holds that new initiatives need Clarity, Control, and Capability.

Expanding capability here means technical skills plus human knowledge, awareness, competencies, influence, and the desire to achieve the goals associated with the technology and the subject matter.

Interestingly, the leaders winning with AI aren’t the most technical people in the room. They are the ones who make good judgement calls, build trust, navigate conflict, communicate well in writing and in speech, look ahead, and own outcomes, with the technology complementing people and improving processes.

Fundamentally, AI handles the data; humans handle the consequences!

With AI, if the goal is to avoid surprises and achieve better outcomes, humans need to drive the process and review the results, drawing on the following human qualities –

March 20, 2026 by Gabriel Milien / Kary Oberbrunner / CAIL CAIL Innovation commentary

info@cail.com www.cail.com 905-940-9000